Apr 02, 2019 By Team YoungWonks *

In our previous blog (https://www.youngwonks.com/blog/Self-driving-cars:-what-autonomous-driving-technology-can-do-today), you read what autonomous/automated driving is all about, its advantages and disadvantages, and what this technology means for several industries. In this blog, we take a look at how autonomous/automated driving technology works.

At one time, a self-driving (autonomous) car, also known as a driverless car, would have been dismissed as a work of science fiction. But with companies such as Tesla, Google/Alphabet’s Waymo, General Motors (GM), Audi, BMW and even Mercedes Benz investing in autonomous driving, this is fast becoming a reality. In fact, according to The Telegraph, the driverless technology industry is expected to be worth £900 billion globally by 2025 and is said to be growing annually by a whopping 16 per cent.

All of which brings us to the question: how do driverless cars work?

To begin with, there are various systems that work with each other so as to control a driverless car. So if we want to understand how an autonomous vehicle works, we first need to look at the functional architecture of the said vehicle. Now the functional architecture is to the vehicle what the anatomy diagram is to us humans. In other words, it refers to how the major parts of the vehicle work together so as to achieve the mission of self-driving without flouting any legal or ethical codes.

Autonomous car vs traditional car

Now before we look at how an autonomous/ self-driving car functions, let’s look at how a traditional car - that needs a human driver at the wheel - works.

In a traditional car, control rests with the human driver, who sees objects in the surroundings with his/her eyes and processes this information with his/her brain. The brain then passes instructions on to the driver’s limbs (arms and legs), which carry out the appropriate tasks such as braking or accelerating, depending upon the situation.

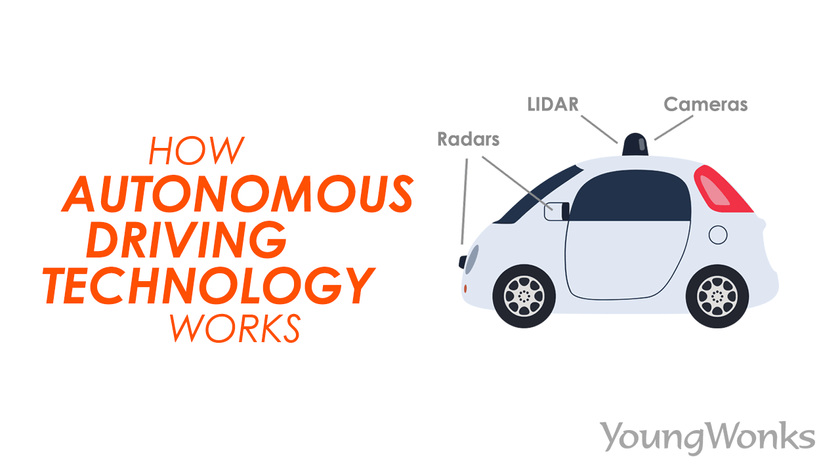

As opposed to this, in an autonomous car, the human driver is taken out of the equation; here the car is - to put it simply - controlled by a computer. Here it’s the sensors which perform the function of the eyes - i.e. they detect and observe the objects in the surroundings. This information is then passed on to the brain equivalent of the autonomous car - the computer - which then transmits the appropriate instruction to the car’s actuators, which in turn are the arms and legs equivalent here. This means it’s the actuators which carry out the task of braking or accelerating.
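The eyes-brain-limbs analogy above can be sketched as a tiny sense-decide-act loop. This is only an illustration: the `Observation` fields, the two-second gap rule and the command names are assumptions made for the example, not how any real car is programmed.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """What the sensors report (hypothetical fields for illustration)."""
    distance_to_obstacle_m: float
    speed_mps: float


def decide(obs: Observation, safe_gap_s: float = 2.0) -> str:
    """The 'brain': pick a command from the sensor reading.

    Brakes when the obstacle is closer than the distance the car
    would cover in `safe_gap_s` seconds at its current speed.
    """
    safe_distance_m = obs.speed_mps * safe_gap_s
    if obs.distance_to_obstacle_m < safe_distance_m:
        return "brake"
    return "accelerate"


def act(command: str) -> str:
    """The 'limbs': a stand-in for sending the command to an actuator."""
    return f"actuator <- {command}"


if __name__ == "__main__":
    # Two made-up sensor readings at 20 m/s: one far obstacle, one near.
    readings = [Observation(80.0, 20.0), Observation(30.0, 20.0)]
    for obs in readings:
        print(act(decide(obs)))
```

A real system runs a loop like this many times per second and weighs far more inputs, but the division of labour between sensors, computer and actuators is the same.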

Sensors

This brings us to a key component of the autonomous car - the sensors. They detect and observe the objects in the surroundings, which is essential to the smooth operation of a self-driving vehicle. There are different kinds of sensors in use in autonomous cars today; each of them has its own unique features. Here we take a look at them.

a. Cameras

Cameras detecting cars on the road (above)

Cameras in autonomous driving work in the same way our human vision works and they use technology similar to the one found in most digital cameras today. Most autonomous cars today have an average of 8 cameras fitted in as this multitude of cameras can scan the environment better and from different angles (read: from the sides and the front and back). Having so many cameras also provides the car with good depth perception that is essential to a good driving experience. Tesla cars, for instance, rely heavily on cameras as they help the cars build a 3D map of their surroundings.

Advantages: Cameras are easier to incorporate in cars as video cameras are already easily available in the market. This means an autonomous carmaker will not need to work from scratch. They also aren’t blind to weather conditions such as fog, rain and snow; all that a human driver can navigate, a camera-fitted autonomous car can too - be it reading street signs or interpreting colors. Moreover, they can be easily hidden within the car’s structures, thus not compromising on the car’s appeal. They are also way less expensive when compared to sensors such as lidars. So using cameras helps bring down costs of self-driving cars.

Disadvantages: Cameras, just like humans, do not produce good results when lighting conditions change and leave objects obfuscated. So strong shadows or bright lights either from the sun or an oncoming car can confuse the cameras. Cameras also typically send what is essentially raw image data back to the system, unlike say a lidar where exact distance and location of an object is provided. That means camera systems will need to depend on powerful machine learning (neural networks or deep learning) computers that can process those images to determine exactly what is where.

b. Lidars

LIDAR stands for Light Detection and Ranging and is a remote sensing method that uses light in the form of laser beams to measure ranges (variable distances); in an autonomous car, these are the distances to the objects around the vehicle. So basically a lidar sends out millions of laser light signals per second and measures how long it takes for them to bounce back.

How does it do it? The answer is rather simple. We know that the speed of light is 299,792,458 meters per second. So when a lidar tracks the time taken for a beam of light to travel and hit an object and bounce back, we can multiply the time taken and the speed of light to calculate the distance travelled by the beam of light. But bear in mind that since the beam has travelled both to the object and back to the lidar, we need to divide this distance by 2 in order to arrive at the exact distance between the lidar of the autonomous vehicle and the object hit by the beam.
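The calculation described above fits in a few lines of Python. The function name and the sample round-trip time are made up for illustration; only the speed of light and the divide-by-two step come from the explanation above.

```python
SPEED_OF_LIGHT_MPS = 299_792_458  # meters per second, as quoted above


def lidar_distance_m(round_trip_time_s: float) -> float:
    """Distance to the object, given the beam's round-trip time."""
    distance_travelled_m = SPEED_OF_LIGHT_MPS * round_trip_time_s
    return distance_travelled_m / 2  # halve it: the beam went there and back


if __name__ == "__main__":
    # A pulse that comes back after 200 nanoseconds (made-up value).
    print(f"{lidar_distance_m(200e-9):.2f} m")
```

Note how short the times involved are: an object roughly 30 meters away returns the pulse in about 200 billionths of a second, which is why a lidar needs very precise timing electronics.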

This means that a lidar makes it possible to create a very high-resolution picture of a car’s surroundings, and in all directions, if it’s placed in the right spot (like the top / roof of a car where it keeps rotating to scan the surroundings). It continues to be this precise even in the dark since the sensors are their own light source.
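To see how individual distance readings become a picture of the surroundings, here is a minimal sketch that turns one lidar return (a distance plus the direction the beam was pointing) into a point in 3D space. The axis convention and the sample scan are assumptions made for the example; real lidar units define their own conventions.

```python
import math


def to_cartesian(range_m: float, azimuth_deg: float, elevation_deg: float):
    """Convert one lidar return (range + beam angles) to an (x, y, z) point.

    Convention assumed here: x forward, y left, z up; azimuth is measured
    from the x axis in the horizontal plane, elevation from that plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal_m = range_m * math.cos(el)
    return (
        horizontal_m * math.cos(az),
        horizontal_m * math.sin(az),
        range_m * math.sin(el),
    )


if __name__ == "__main__":
    # One rotation sampled every 90 degrees, every return at 10 m (made-up data).
    scan = [(10.0, az, 0.0) for az in (0, 90, 180, 270)]
    for r, az, el in scan:
        x, y, z = to_cartesian(r, az, el)
        print(f"azimuth {az:3d} deg -> x={x:6.2f} y={y:6.2f} z={z:5.2f}")
```

Repeating this for every return in a rotation gives the collection of points, often called a point cloud, that makes up the 3D picture.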

Advantages: Lidars are accurate; they offer a 360 degree view of the car’s surroundings - in 3D imagery at that - and they detect things across a long distance. They are also very good at capturing information in different types of ambient light (night or day) as they are not dependent on external light sources. This is a key advantage because cameras are worse in the dark, and radar and ultrasonic sensors aren’t as precise either. Lidars also save computing power as they can immediately tell the distance to an object and the direction of that object. A camera-based system, on the other hand, needs to take images and then analyze them to arrive at the distance and speed of objects, thus taking up far more computational power.

Disadvantages: On the downside, lidars are rather expensive - way more than other sensors, in fact. At one time, they cost as much as 75,000 USD. And while they are cheaper now (down to around 7,500 USD), they are still not cheap. Plus they are quite bulky, as they involve several moving mechanical parts (for now, at least). In many systems, lidars cannot yet see well through fog, snow and rain; nor do they provide information that cameras easily can, like the words on a sign or the color of a light.

c. Radars

RADAR, short for Radio Detection and Ranging, is also a remote sensing method, except that instead of using light, it uses radio waves to measure ranges (variable distances) to surrounding objects.

In other words, a radar uses radio waves to detect objects and determine their range, angle, and/or velocity.

And how does it do it? Again, since radio waves are electromagnetic waves, they travel at the speed of light, i.e. 299,792,458 meters per second. So when a radar tracks the time taken for a radio wave to travel and hit an object and bounce back, we can multiply the time taken and the speed of the radio wave to calculate the distance travelled by the wave. But bear in mind that since the wave has travelled both to the object and back to the radar, we again need to divide this distance by 2 in order to arrive at the exact distance between the radar of the autonomous vehicle and the object hit by the radio wave.

Advantages: Radars are not exactly a new technology, which means they have only become better with time and they are fairly inexpensive, thus allowing autonomous car companies to cut costs. They are quite reliable in the long-term as they do well in weather conditions such as fog, rain, snow and dust. They can typically see a longer distance than lidar which is important for vehicles such as trucks.

Disadvantages: Radars are less angularly accurate than lidars and can lose sight of the target vehicle on curves. Their object resolution is poor, and they can get confused if multiple objects are placed very close to each other. For instance, a radar can consider two small cars in the vicinity as one large vehicle.
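A rough way to picture the two-cars-as-one problem: a radar beam spreads out as it travels, so the further away two objects are, the more likely they fall inside the same beam. The sketch below uses the small-angle approximation (beam width at a distance is roughly the distance times the beam angle in radians). The 4 degree beamwidth and the 3 meter gap are made-up values for illustration, not figures for any particular radar.

```python
import math


def beam_width_at_range_m(range_m: float, beamwidth_deg: float) -> float:
    """Approximate width of the radar beam at a given range (small-angle rule)."""
    return range_m * math.radians(beamwidth_deg)


def seen_as_separate(gap_m: float, range_m: float, beamwidth_deg: float) -> bool:
    """True if two side-by-side objects are further apart than the beam is wide."""
    return gap_m > beam_width_at_range_m(range_m, beamwidth_deg)


if __name__ == "__main__":
    gap_m = 3.0          # sideways gap between two cars (assumed)
    beamwidth_deg = 4.0  # assumed beamwidth for this sketch
    for range_m in (20.0, 100.0):
        verdict = "two objects" if seen_as_separate(gap_m, range_m, beamwidth_deg) else "one blob"
        print(f"at {range_m:5.1f} m: {verdict}")
```

With these assumed numbers, the two cars can be told apart when they are close but blur together further down the road.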

d. Ultrasonic sensors

The ultrasonic sensor works on a principle similar to radars and lidars, except that it does so using ultrasonic (sound) waves. The sensor head emits an ultrasonic wave and receives the wave reflected back from the target (the object being detected). The sensor then measures the distance to the object by measuring the time between emission and reception. The distance is calculated by first multiplying the speed of sound (about 343 meters per second in air at room temperature) by the time taken for the sound wave to hit the object and come back, and then dividing the value by 2, as the time here covers both the outward and the return trip.

Advantages: Ultrasonic sensors reflect sound off objects, so the color or transparency of an object has no impact on the sensor’s reading. Similarly, dark environments have no adverse bearing on the sensor’s object detection abilities. They are also fairly easy to use and easy on the pocket; nor are they highly affected by dust, dirt, or high-moisture environments. Moreover, they can easily interface with microcontrollers.

Disadvantages: Their readings are affected by objects covered in soft fabrics that absorb sound, and by temperature changes of more than 5-10 degrees. But the biggest disadvantage is their limited range; they can only detect objects within a range of three to 10 meters, as opposed to lidars and radars which have a higher range (lidars have a range of up to 300 meters). This means ultrasonic sensors are ideal for detecting only nearby objects and not faraway ones.
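Here is a small sketch of the ultrasonic distance calculation, along with one reason the weather matters: the speed of sound in air changes with temperature. The formula used for the speed of sound is a common linear approximation for dry air, and the echo time is a made-up reading; neither comes from a specific sensor’s datasheet.

```python
def speed_of_sound_mps(temp_c: float) -> float:
    """Approximate speed of sound in dry air (common linear approximation)."""
    return 331.3 + 0.606 * temp_c


def ultrasonic_distance_m(echo_time_s: float, temp_c: float = 20.0) -> float:
    """Distance from the echo's round-trip time, halved for go-and-return."""
    return speed_of_sound_mps(temp_c) * echo_time_s / 2


if __name__ == "__main__":
    echo_time_s = 0.0175  # made-up round-trip time
    for temp_c in (10.0, 20.0, 30.0):
        distance_m = ultrasonic_distance_m(echo_time_s, temp_c)
        print(f"{temp_c:4.1f} C -> {distance_m:.3f} m")
```

The same echo time maps to slightly different distances at different temperatures, so a sensor that always assumes one fixed speed of sound will be a little off when the air is much warmer or colder than expected.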

e. Inertial Measurement Units (IMUs)

An inertial measurement unit (IMU) is an electronic device that measures and reports an object’s specific force, angular rate, and sometimes the magnetic field surrounding the body, by using a combination of accelerometers and gyroscopes, and sometimes also magnetometers. So basically an IMU can detect whether the car is moving, in what direction (backward or forward), whether it is turning and so on. While the accelerometer detects linear acceleration (backward or forward), the gyroscope detects rotational motion. Motion sensors of this kind are also used in phones, which is why images and videos change from portrait mode to landscape mode when we turn the phone. Similarly, the accelerometer in phones helps the phone wake up when it is moved; it also helps change the song when we shake the phone in a certain manner. Together these accelerometers and gyroscopes form the inertial measurement unit/system.

Advantages: IMUs are widely in use. They are typically used not just in self-driving vehicles but also to maneuver aircraft and spacecraft, including satellites and landers. An IMU even lets a navigation system keep estimating its position when GPS signals are unavailable, say in tunnels, inside buildings, or when there is electronic interference.

Disadvantages: A big disadvantage of using IMUs for navigation is that they usually suffer from accumulated error. Since the guidance system is continually integrating acceleration with respect to time to calculate velocity and position, any measurement errors, however tiny, add up over time. This leads to an ever-growing difference between where the system thinks it is located and its actual location.
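The accumulated error is easy to reproduce in a few lines. In this sketch a car is standing perfectly still, but its accelerometer reports a tiny constant error; we integrate the readings twice, as described above, and watch the estimated position wander off. The sampling rate and the size of the error are assumed values chosen for illustration.

```python
def dead_reckon(accel_readings, dt_s):
    """Integrate acceleration twice to estimate velocity and position."""
    velocity_mps = 0.0
    position_m = 0.0
    for accel_mps2 in accel_readings:
        velocity_mps += accel_mps2 * dt_s    # acceleration -> velocity
        position_m += velocity_mps * dt_s    # velocity -> position
    return velocity_mps, position_m


if __name__ == "__main__":
    sample_rate_hz = 100   # assumed sampling rate
    dt_s = 1 / sample_rate_hz
    bias_mps2 = 0.01       # assumed constant sensor error
    for seconds in (10, 60, 600):
        n_samples = seconds * sample_rate_hz
        # True acceleration is 0 (the car is parked); only the error is measured.
        _, drift_m = dead_reckon([bias_mps2] * n_samples, dt_s)
        print(f"after {seconds:3d} s: position error of about {drift_m:.1f} m")
```

With a constant error like this, the position error grows roughly with the square of the elapsed time, so waiting ten times longer gives about a hundred times more drift. This is why IMU estimates are usually corrected with other sources such as GPS whenever they are available.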

Autonomous Driving Today - Google vs Tesla

While there are many players in the autonomous driving industry today, it’s Waymo (Google) and Tesla that have pioneered the field so far. Others - such as Audi, Mercedes and General Motors through its arm Cruise Automation - are yet to make as many strides.

Both Tesla and Waymo are aiming to collect and process enough data to create a car that can drive itself. But they are trying to do this in different ways and on different scales.

Tesla

Tesla is looking to take advantage of the several hundreds of thousands of cars it already has on the road by collecting real-world data about how those vehicles perform (and how they might perform) with Autopilot, its current semi-autonomous system. In fact, its system is said to be based on monocular forward-looking camera technology from Mobileye. This means that Tesla’s system is not really able to localize itself on a map, at least not to the degree needed to achieve lane keeping (the GPS isn’t as good as it is with Google). That said, the forward-looking camera can catch the location and curvature of highway lane markers, which is typically enough to ensure that the car stays in its lane and carries out basic lane change maneuvers. And because cameras alone are not ideal and lidars are rather expensive, Tesla still incorporates a radar at the front of its cars for additional input.

All in all, Tesla’s cars use eight cameras, 12 ultrasonic sensors, and one forward-facing radar. For Tesla, the emphasis has been on achieving a low-cost solution and it is said to be on track to automate driving up to 90 percent in the coming years. This also means that the remaining 10 percent of driving situations will need a driver, and getting around those situations - making them driverless - will continue to be tricky.

Waymo

Waymo, Google’s self-driving car project, uses powerful computer simulations and feeds what it learns from those into a smaller real-world fleet. It has already simulated 5 billion miles of autonomous driving and it has achieved 5 million self-driven miles on public roads. This means it has achieved a fleet of fully self-driving - yes, driverless - cars on public roads. On December 5, 2018, Waymo launched its first commercial self-driving car service called Waymo One, where users in the Phoenix metropolitan area can use an app to request the service.

And as opposed to Tesla, which relies more on computing power, Waymo’s self-driving minivans aim to reduce the load on their software by using several lidars - at the front, on the sides and on the roof. All in all, they use three different types of lidar sensors, five radar sensors, and eight cameras. So the autonomous driving system here relies on a 64-beam lidar to localize itself to within 10 cm on a detailed pre-existing map. It uses the lidar data - which is said to be very precise - to make a 360 degree world model that tracks and predicts movements for all nearby vehicles, pedestrians, and other obstacles. This in turn allows it to navigate intelligent paths through complex highway or urban environments.

Autonomous Driving - The Road Ahead

Cheaper lidars

As seen above, a key difference between Tesla and Waymo is the use of lidar; the latter uses it and the former doesn’t. One of the primary advantages of lidar is accuracy and precision. In December 2017, automotive news website The Drive reported that Waymo’s lidar is so incredibly advanced that it can tell what direction pedestrians are facing and predict their movements. Chrysler Pacifica minivans fitted with Waymo’s lidar are said to read the hand signals that bicyclists use, and so predict which direction the cyclists will turn. Of course, as mentioned earlier, lidars are typically expensive and if Tesla actually makes smoothly driving autonomous cars possible without lidar, it would be a big win for them.

But the fact remains that lidars - while still more expensive than other sensors - have become cheaper than before and are set to become cheaper still. At one point, lidars were priced at 75,000 USD, thus driving up the cost of the car itself. But with Google’s Waymo making its own lidars, and not just for its own vehicles but also for other automobile companies, lidar costs have reduced drastically to around 7,500 USD. What’s more, much like electronics, lidars are said to become cheaper in the coming months, even to around 5,000 USD.

V2V and V2I technology

Another future development that is expected in the autonomous driving industry is the rise of V2V (Vehicle-to-vehicle) and V2I (Vehicle-to-infrastructure) technology, together often grouped under V2X (Vehicle-to-everything). V2V and V2I components will make it possible for the autonomous vehicle to interact with and get information from other machine agents in the environment, such as information transmitted from a traffic light that it has turned green or warnings from an oncoming car.
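To make the idea concrete, here is a toy sketch of a car reacting to the two kinds of messages mentioned above: one from a traffic light and one from another vehicle. The message classes, their fields and the 200 meter threshold are entirely hypothetical and invented for this example; real V2V/V2I systems use standardized message formats that are far more detailed.

```python
from dataclasses import dataclass


@dataclass
class TrafficLightMessage:
    """Hypothetical V2I message sent by a traffic light."""
    intersection_id: str
    state: str  # "red", "amber" or "green"


@dataclass
class HazardWarning:
    """Hypothetical V2V message sent by another vehicle."""
    sender_id: str
    description: str
    distance_ahead_m: float


def react(message) -> str:
    """Turn an incoming message into a simple driving decision."""
    if isinstance(message, TrafficLightMessage):
        return "proceed" if message.state == "green" else "stop at line"
    if isinstance(message, HazardWarning):
        # Assumed rule: only slow down for hazards closer than 200 m.
        return "slow down" if message.distance_ahead_m < 200 else "continue"
    return "ignore"


if __name__ == "__main__":
    inbox = [
        TrafficLightMessage("main-and-5th", "green"),
        HazardWarning("car-42", "debris on road", 120.0),
    ]
    for message in inbox:
        print(type(message).__name__, "->", react(message))
```

The point of the sketch is that the car learns about the light or the hazard directly from the message, without having to see it with its own sensors first.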

Electrified roads for charging vehicles

In April 2018, the world got its first electrified road for charging vehicles, near Stockholm in Sweden. Essentially a stretch of about 2 km (1.2 miles) of public road, it has an electric rail embedded in it which recharges the batteries of cars and trucks driving on it. Moreover, the government’s roads agency is said to have already drawn up a national map for future expansion. This move is said to help with the problems of keeping electric vehicles charged and keeping the manufacture of their batteries affordable.

Indeed, while such specialised roads may initially exist only in certain parts of the world, they will be a huge blessing for electric vehicles which won’t have to stop for recharging and this would in turn help reduce dependence on fuel. In addition to this, the roads will be a source of revenue generation for those maintaining them and the inductive wires / electric rails embedded in them.

The Future of Transportation: The Role of Coding in Autonomous Driving

The burgeoning field of autonomous driving is a brilliant example of how cutting-edge technology can reshape our everyday lives, making transportation safer, more efficient, and sustainable. At the heart of this revolution lies the power of coding and software development, skills that can be nurtured from a young age. YoungWonks offers Coding Classes for Kids that introduce students to the fundamentals of programming, a critical component in the development of autonomous vehicles. Our Python Coding Classes for Kids take it a step further, teaching a language that is pivotal in processing the vast amounts of data involved in self-driving technology. Additionally, our Raspberry Pi, Arduino and Game Development Coding Classes expose students to the hardware-software integration essential for building and understanding the mechanisms behind autonomous systems. Through such comprehensive learning experiences, YoungWonks aims to inspire the next generation of innovators who will continue to push the boundaries of what's possible in technology and transportation.

*Contributors: Written by Vidya Prabhu; Lead image by: Leonel Cruz