Oct 28, 2021 By Team YoungWonks *

What is edge computing? This is a term often used in tech circles, especially with regards to IoT (Internet of Things) devices today (read our blog about IoT here: https://www.youngwonks.com/blog/What-is-the-Internet-of-Things). In this blog post, we shall look at what the term edge computing means, why it is needed, its varied applications today, the many benefits it affords and the challenges associated with it.

What is Edge Computing?

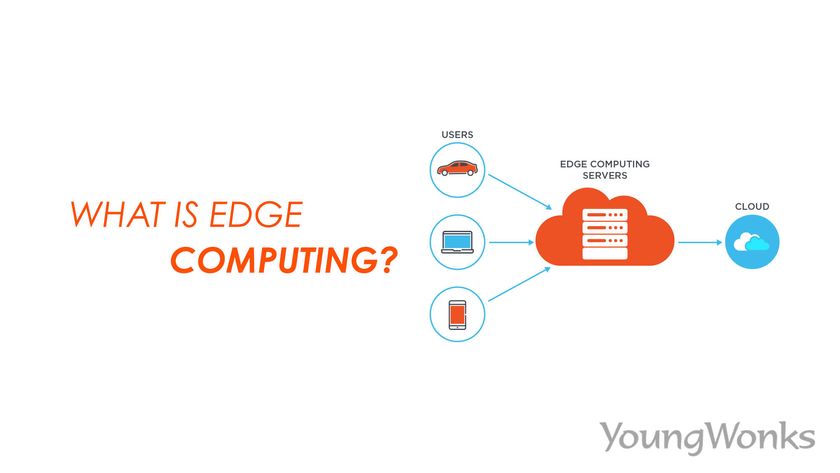

Edge computing refers to the deployment of computing and storage resources at the location where data is produced. This typically puts compute and storage at the same point as the data source at the edge of the network. With computation and data storage in such an edge location that's closer to the sources of data, response times tend to improve and bandwidth is saved.

For instance, a small enclosure with many servers and some storage could be installed on top of a wind turbine to collect and process data produced by sensors within the turbine. Another example can be a railway station placing a decent amount of compute and storage within the station itself to facilitate easier collection and processing of track and rail traffic sensor data. The results of such processing could be sent back to another data center for review, archiving and merger with other data results.

It is often mistakenly thought that edge computing and IoT are one and the same. Edge computing is in fact an alternative approach to the cloud environment aka cloud computing as opposed to the IoT. The former is a topology- and location-sensitive type of distributed computing, while IoT encompasses use case examples of edge computing. So, edge computing refers more to a certain architecture than a specific technology. Today, all the big three public clouds – AWS, Google Could Platform, and Microsoft Azure – are beginning to offer edge computing capabilities. So essentially, all of them are offering services of a mini datacenter, typically connected to IoT devices and deployed at the enterprise network’s edge rather than in the cloud.

The practice of edge computing can be traced back to content delivery networks created in the late 1990s to serve web and video content from edge servers that were located close to users. In the early 2000s, these networks went on to host applications aka apps and application components at the edge servers, resulting in the first commercial edge computing services that could host applications such as shopping carts, ad insertion engines, real-time data aggregators and dealer locators.

How Edge Computing Works

Edge computing is all about the location. In a typical enterprise computing setup, data is generated at a client endpoint (say, a user’s computer) and the data moves across the internet, through the corporate local area network (LAN), where it is stored and worked upon by an enterprise application, after which the results are communicated back to the client endpoint.

But with increasingly more people / businesses and devices connected to the internet and the resulting increase in the volume of data being produced, traditional data center infrastructures are finding it tough to keep up with the workload even as they suck up more computing power. This has paved the way for more and more enterprise-generated data to be created outside centralized data centers. It is important to bear in mind that moving so much data, especially in time- or disruption-sensitive situations can put a considerable amount og strain on the internet.

This is why IT architects are now focusing on a simple concept: if you can’t get the data closer to the data center, get the data center closer to the data. Thus, they are taking storage and computing resources from the data center and moving them to where the data is produced; i.e. the logical edge of the infrastructure. This also means that as a concept, edge computing is not exactly new - for it is rooted in decades-old ideas of remote computing, think remote offices and branch offices.

Why Edge Computing is Needed

Edge computing, also known as fog computing, aims to get past three main network limitations: bandwidth, latency and congestion or reliability; thereby offering high performance.

Bandwidth

Bandwidth refers to the amount of data that a network can carry over time, and it is usually shared in bits per second. All networks have a limited bandwidth, and the limits are stricter for wireless communication. Of course, network bandwidth can be increased to accommodate more devices and data, but this also means higher costs and it doesn’t exactly solve all problems as there is still a finite (albeit higher) limit to the data that can be transferred across the network.

Latency

Latency is the time required to send data between two points on a network. The communication may usually occur at the speed of light, but large physical distances and network congestion or outages can cause delays in moving data across the network. This too can have harmful effects as it in turn can delay important analytics and decision-making processes and reduces the system’s ability to respond in real time.

Congestion

This is a real issue as well. The internet may be a global network of networks, but the sheer volume of data involved with tens of billions of devices is large enough to even overwhelm the internet, thus creating high levels of congestion and leading to time-consuming data retransmissions. In other cases, network outages can aggravate existing congestion and even knock off communication to some internet users entirely.

With edge computing, all three problems shared above can be tackled better. Here, servers and storage is deployed where the data is generated, which means many devices get to operate efficiently over a smaller and more effective LAN with ample bandwidth used exclusively by local data-generating devices. Local storage collects and protects the raw data, even as local servers carry out essential edge analytics thereby helping make decisions in real time before sending results to the central data center. It is not surprising then that Gartner has predicted that by 2025, 75 percent of enterprise-generated data will be produced outside of centralized data centers.

Applications of Edge Computing

Edge Computing for Manufacturing

Edge computing has proven to be useful in manufacturing, especially industrial manufacturing. It is known to aid monitoring of manufacturing, enabling real-time analytics and Machine Learning (ML) at the edge to find production errors and enhance overall product manufacturing quality. It has also helped facilitate the addition of environmental sensors throughout the manufacturing plant, sharing insight into how each product part is assembled and stored and the duration for which they are typically in stock.

Edge Computing for Farming

Suppose there’s a business growing crops indoors without sunlight, soil or pesticides. These conditions typically decrease grow times by more than 60%. But using sensors - powered by edge computing - can help the business track water use, nutrient density and determine optimal harvest. The data thus collected can be analyzed to find the effects of environmental factors and continually improve the crop growing algorithms to ensure a better harvest.

Edge Computing for Better Healthcare

Healthcare too finds a place among edge computing solutions. With the healthcare industry increasingly relying on patient data collected from devices, sensors and other medical equipment, the edge computing model makes for a good choice as it facilitates the use of automation and ML to access the data and analyse it well, enabling medical professionals to take immediate action in case of emergencies.

Edge Computing for Workplace Safety

Edge computing can collate and analyse data from on-site cameras, employee safety devices and several other sensors to help businesses keep a check on workplace conditions and see to it that employees follow established safety protocols; especially in case of remote / dangerous workplaces such as construction sites or oil rigs.

Edge Computing for Retail

Retail businesses can also generate large data volumes owing to surveillance, stock tracking, sales data and other real-time business details. Edge computing can help assess such vast amounts of data and recognise business opportunities as well. And given that retail businesses can differ drastically in local environments, edge computing does make for an effective solution in that it offers local processing at each store.

Edge Computing for Network Optimization

With edge computing, one can measure performance for users across the internet and then use analytics to pick out the most reliable, low-latency network path for each user’s traffic, thus helping optimize network performance. Basically, edge computing can help guide traffic across the network for achieving optimal time-sensitive traffic performance.

Edge Computing for Autonomous Vehicles

Autonomous vehicles or self-driving cars need and generate around 5 TB to 20 TB data every day, including information about location, speed, vehicle condition, road conditions, traffic conditions and other vehicles. Moreover, this data needs to be aggregated and analyzed in real time, with the vehicle in motion. Such significant onboard computing can definitely gain a lot from edge computing. Plus, the data can help authorities and businesses manage vehicle fleets on the basis of actual conditions on the road.

Edge Computing for Augmented Reality (AR) and Virtual Reality (VR)

Edge computing, along with 5G networks, is said to have the potential to drastically transform AR & VR use cases. While 5G networks will lend ultra-low latency and high bandwidth, moving the data to the edge means that images will be rendered much closer to the end-user (compared to the cloud) further enhancing the use cases.

Benefits of Edge Computing

Autonomy

Edge computing is particularly useful when the connectivity is not reliable or the bandwidth is restricted due to the site’s environmental features. Oil rigs, ships at sea, remote farms or other remote locations (think rainforest or desert) are some examples. With edge computing, the compute work is done on site; at times, on the edge device itself. So it could be done on water quality sensors on water purifiers in remote villages, and transmit data to the data center only when connectivity is available. The local data processing thus reduces the amount of data to be sent, needing much less bandwidth or connectivity time.

Better edge security

It is easier to implement and ensure data security in edge computing. With computing being carried out at the edge, all passing through the network to the main data center can be secured through encryption, and the edge deployment itself can be toughened to put up a strong fortification against hackers.

Data sovereignty

Moving large amounts of data is not just technically imposing; there are also data security, privacy and other legal issues that crop up when the data moves across national and regional boundaries. With edge computing carrying out the computing at the source of the data, it becomes easier to comply with the relevant laws of the region that govern how data needs to be stored, processed and exposed. This info that is processed locally secures the sensitive data before sending it to the cloud or primary data center in another location.

Challenges associated with Edge Computing

Connectivity

Edge computing does help overcome usual network limitations, but it still needs some minimum level of connectivity. It’s imperative to ensure that the edge deployment accommodates poor or erratic connectivity and factors in a scenario when the connectivity is lost. Thus, successful edge computing absolutely requires addressing the issues of autonomy, AI (artificial intelligence) and planning for failures caused by connectivity issues.

Limited capability

Edge computing, as the name suggests, refers to computing at the edge. This comes with limited capabilities since the edge is not the main data center in the central location which is typically better equipped. And while deploying an edge infrastructure can be useful, the scope and purpose of this deployment needs to be clearly defined or it can take up too many resources.

Data lifecycles

Not only do we deal with a lot of data today, but also a big chunk of it is actually unnecessary. Take for instance, a medical monitoring device; here the data pertaining to the health problem is important but storing info about the patient’s regular lifestyle may not really be of much use. More often than not, the data in real-time analytics is short-term data that isn't stored beyond a certain time. Thus, enterprises using edge computing need to figure out what data to keep and what to discard after performing the analyses, in addition to storing the former as per the prevailing business and regulatory laws.

Security

With edge computing being used for IoT devices, it is essential to focus on proper device management, including enforcing policy-driven configuration and ensuring security in the computing and storage resources. Attention also needs to be paid to encryption in the data at rest and in flight, since IoT devices often lack sufficient security, especially those synced to an edge site.

Exploring Edge Computing with YoungWonks

Edge computing represents a significant shift in data processing and internet architecture, moving closer to the sources of data generation. This not only reduces latency but also decreases bandwidth use, enabling real-time applications that are critical in various sectors including healthcare, manufacturing, and smart cities. At YoungWonks, we believe in preparing the next generation to be at the forefront of such technological advances. Our Coding Classes for Kids introduce the foundational concepts necessary for understanding the complexities of edge computing. For students interested in developing the software that powers edge devices, our Python Coding Classes for Kids provide the programming skills needed to innovate and problem-solve efficiently. Meanwhile, those fascinated by the hardware aspect of technology can benefit from our Raspberry Pi, Arduino and Game Development Coding Classes, which offer hands-on experience in building and programming the devices that are essential to edge computing networks.

*Contributors: Written by Vidya Prabhu; Lead image by: Abhishek Aggarwal